This week I read an article in Blocks and Files, Facebook’s Kangaroo jumps over flash limitations, which piqued my interest. I went searching for more info and found an fb blog post, Kangaroo: A new flash cache optimized for tiny objects, which sent me to an ACM SOSP (Symposium on Operating Systems Principles) best paper of 2021, Caching billions of tiny objects on flash.

First, as you may recall, flash has inherent limitations when it comes to writing. The more writes a flash device takes, the more NAND cells start to fail over time. Flash devices are only rated for some number of (standard, ~4KB) block writes. For example, the Micron 5300 Max SSD only supports 3-5 DWPD (drive writes per day). So a 2TB Micron 5300 Max SSD can only sustain from ~1.5B to 2.4B 4KB block writes per day. That seems more than sufficient for most work, but somebody like fb, using the SSD as an object cache and writing a few billion or more 100B(yte) objects day in and day out, can consume an SSD in no time, especially if they are writing one 100B object per block.
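The block write budget above is simple arithmetic, and a quick sketch makes it easy to replay with other drive sizes. The function name and the decimal definition of a TB are my own assumptions, not anything from the paper or the Micron data sheet.

```python
def block_writes_per_day(capacity_bytes: int, dwpd: float, block_bytes: int = 4096) -> float:
    """Block-sized writes per day allowed by a drive's DWPD rating."""
    blocks_on_drive = capacity_bytes / block_bytes
    return blocks_on_drive * dwpd

TB = 10**12  # assumption: drive capacity quoted in decimal terabytes

low = block_writes_per_day(2 * TB, 3)   # 2TB drive at 3 DWPD
high = block_writes_per_day(2 * TB, 5)  # 2TB drive at 5 DWPD
print(f"{low / 1e9:.2f}B to {high / 1e9:.2f}B 4KB block writes per day")
```

At one 100B object per block write, a few billion object writes a day would use up that whole budget.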

So there’s got to be a better way to cache small objects into bigger blocks. Their paper talks of two prior approaches:

- Log structured storage – here multiple 100B objects are stored in a single 4KB block and written out with one IO rather than 40. This works fairly well, but the index, which maps an object key to a log location, takes up a lot of memory. If you’re caching ~3B 100B objects in a log and each object’s index entry takes 16 bytes, that’s 48GB of DRAM just for the index.

- Associative set storage – here each object’s key is hashed to a set of (one or more) storage blocks and the object is stored there. In this case there’s no DRAM index, but you do need a quick way to determine whether an object is in the set storage or not. This can be done with bloom filters (see: wikipedia article on bloom filters). So if each associative set stores 400 objects and one needs to store 3B objects, that’s 7.5M sets and one needs 30MB of bloom filters (assuming 4 bytes each). The only problem with associative sets is that when one adds an element to a set, the whole set has to be rewritten. So if over time you add 400 objects to a set, you are writing that set 400 times, all of which eats into the DWPD budget for the flash storage.
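To put the DRAM cost of the two approaches side by side, here is the same arithmetic as a small sketch. The constants are just the figures quoted above (3B objects, 16-byte index entries, 400 objects per set, 4-byte bloom filters); the variable names are mine.

```python
OBJECTS = 3_000_000_000          # ~3B cached objects
LOG_INDEX_BYTES_PER_OBJECT = 16  # one DRAM index entry per object in a log
OBJECTS_PER_SET = 400            # objects held by one associative set
BLOOM_BYTES_PER_SET = 4          # one small bloom filter per set

log_index_bytes = OBJECTS * LOG_INDEX_BYTES_PER_OBJECT
num_sets = OBJECTS // OBJECTS_PER_SET
bloom_bytes = num_sets * BLOOM_BYTES_PER_SET

print(f"log index:     {log_index_bytes / 1e9:.0f} GB")
print(f"bloom filters: {bloom_bytes / 1e6:.0f} MB across {num_sets / 1e6:.1f}M sets")
print(f"ratio:         {log_index_bytes // bloom_bytes}x")
```

With these numbers the log index needs about 1600 times the DRAM of the bloom filters, which is the memory side of the trade. The write side goes the other way, since the set store pays one set rewrite per inserted object.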

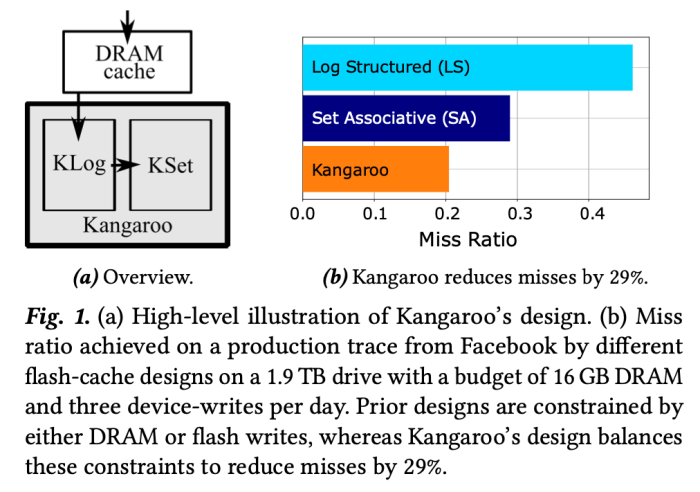

In Kangaroo, fb engineers have combined the best of both approaches and added a small DRAM cache.

How does it work?

Their 1st tier is a DRAM cache, which is ~1% of the capacity of the whole object cache. Objects are inserted into the DRAM cache first and are evicted in a least recently used fashion, that is, objects that have not been used in the longest time are moved out of this cache and are written to the next layer (not quite, but we’ll get to that in a moment).

Their 2nd tier is a log structured system, at ~5% of cache capacity. They call this a Klog and it consists of a ring of 4KB blocks on SSD, with a DRAM index telling where each object is located on the ring. Objects come in, are buffered together into a 4KB block and are written to the next empty slot in the ring, with the DRAM index updated accordingly. Objects are evicted from Klog in such a way that a group of them that map to the same associative set and are LRU can all be evicted at the same time. They have structured the Klog DRAM index so that it makes finding all these objects easy. Also, any log structured system needs to deal with garbage collection. Let’s say you evict 5 objects in a 4KB block; that leaves 35 that are still good. Garbage collection will read a number of these partially full blocks and mash all the good objects together, leaving free space for new objects that need to be cached.
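To make the ring-plus-index idea concrete, here is a toy sketch of a log structured tier. It is not fb’s Klog: the class and field names are made up, a Python list stands in for the flash ring, key overhead is ignored, and when the ring wraps the oldest block is simply dropped instead of being garbage collected or moved on to the next tier.

```python
BLOCK_SIZE = 4096  # bytes per block on the (simulated) flash ring

class ToyLog:
    def __init__(self, num_blocks: int):
        self.ring = [None] * num_blocks  # each slot holds one block's objects
        self.head = 0                    # next ring slot to write
        self.buffer = []                 # (key, value) pairs waiting in DRAM
        self.buffered_bytes = 0
        self.index = {}                  # DRAM index: key -> ring slot
        self.flash_writes = 0            # block writes issued so far

    def put(self, key: str, value: bytes) -> None:
        # Flush first if this object would overflow the block being built.
        if self.buffered_bytes + len(value) > BLOCK_SIZE:
            self._flush()
        self.buffer.append((key, value))
        self.buffered_bytes += len(value)

    def _flush(self) -> None:
        if not self.buffer:
            return
        slot = self.head
        old_block = self.ring[slot]
        if old_block is not None:
            # Ring wrapped: forget index entries that still point at this slot.
            for key in old_block:
                if self.index.get(key) == slot:
                    del self.index[key]
        self.ring[slot] = dict(self.buffer)  # one block write for many objects
        for key, _ in self.buffer:
            self.index[key] = slot
        self.head = (self.head + 1) % len(self.ring)
        self.buffer, self.buffered_bytes = [], 0
        self.flash_writes += 1

    def get(self, key: str):
        for k, v in reversed(self.buffer):   # newest buffered copy wins
            if k == key:
                return v
        slot = self.index.get(key)
        return None if slot is None else self.ring[slot][key]

log = ToyLog(num_blocks=8)
for i in range(100):
    log.put(f"obj{i}", bytes(100))           # one hundred 100B objects
print(log.flash_writes, "block writes for 100 objects")
```

Forty 100B objects fit in each 4KB block, so 100 inserts cost only two block writes, with the last 20 objects still sitting in the DRAM buffer. The price is the `index` dictionary, which grows with every object.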

The 3rd and final tier is a set associative store, which they call the Kset, that uses bloom filters to show object presence. For this tier, an object’s key is hashed to find a block to put it in, the block is read, the object inserted and the block rewritten. Objects are evicted out of the set associative store based on LRU within a block. The bloom filters are used to determine whether an object exists in a set associative block.
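Here is a rough sketch of the read-modify-rewrite cycle and the bloom filter check for a set associative tier. Everything in it is an illustration rather than fb’s Kset: the names are hypothetical, Python dicts stand in for flash blocks, the bloom filter is a 32-bit integer (4 bytes, as assumed earlier) with two SHA-256 based hash functions, and eviction is oldest-inserted-first as a stand-in for the real policy.

```python
import hashlib

def _hash(key: str, seed: int) -> int:
    """Deterministic hash of key, varied by seed."""
    digest = hashlib.sha256(f"{seed}:{key}".encode()).digest()
    return int.from_bytes(digest[:8], "big")

class ToySetStore:
    def __init__(self, num_sets: int, objects_per_set: int = 40,
                 bloom_bits: int = 32, bloom_hashes: int = 2):
        self.sets = [dict() for _ in range(num_sets)]  # simulated flash sets
        self.blooms = [0] * num_sets                   # DRAM: one filter per set
        self.objects_per_set = objects_per_set
        self.bloom_bits = bloom_bits
        self.bloom_hashes = bloom_hashes
        self.flash_reads = 0
        self.flash_writes = 0

    def _set_id(self, key: str) -> int:
        return _hash(key, 0) % len(self.sets)

    def _bloom_mask(self, key: str) -> int:
        mask = 0
        for seed in range(1, self.bloom_hashes + 1):
            mask |= 1 << (_hash(key, seed) % self.bloom_bits)
        return mask

    def insert(self, key: str, value: bytes) -> None:
        sid = self._set_id(key)
        self.flash_reads += 1                 # read the whole set
        members = self.sets[sid]
        members.pop(key, None)
        if len(members) >= self.objects_per_set:
            del members[next(iter(members))]  # evict the oldest-inserted object
        members[key] = value
        bloom = 0                             # rebuild filter for current members
        for k in members:
            bloom |= self._bloom_mask(k)
        self.blooms[sid] = bloom
        self.flash_writes += 1                # rewrite the whole set

    def get(self, key: str):
        sid = self._set_id(key)
        mask = self._bloom_mask(key)
        if self.blooms[sid] & mask != mask:
            return None                       # definitely absent: skip the flash read
        self.flash_reads += 1
        return self.sets[sid].get(key)

store = ToySetStore(num_sets=1, objects_per_set=2)
for name in ("a", "b", "c"):
    store.insert(name, name.upper().encode())
print(store.flash_writes, "set rewrites for 3 inserts")
```

Note the one set rewrite per insert, which is exactly the write amplification the set associative approach suffers from when it is fed one object at a time.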

There are a few items missing from the above description. As can be seen in Figure 3B above, Kangaroo can jettison objects that are LRUed out of DRAM instead of adding them to the Klog. The paper suggests this can be done purely at random, say by admitting into the Klog only 95% of the objects being LRUed from DRAM. The jettison threshold for Klog to Kset is different. Here they will jettison single object sets. That is, if only one object would be evicted and written to a set, it’s jettisoned rather than saved in the Kset. The engineers call this a Kset threshold of 2 (indicating the minimum number of objects in a single set that can be moved to Kset).
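The two admission rules can be sketched in a few lines. This is only my reading of the description above: the function names, the batch-at-a-time grouping and the `set_of` callback are assumptions for illustration, not the paper’s interfaces.

```python
import random
from collections import defaultdict

def admit_to_log(rng: random.Random, admit_probability: float = 0.95) -> bool:
    """Randomly admit an object leaving DRAM into the log tier."""
    return rng.random() < admit_probability

def plan_set_moves(evicted_keys, set_of, threshold: int = 2):
    """Group objects leaving the log by target set; move only big enough groups.

    Returns (moved, dropped): moved maps set id -> keys written together in
    one set rewrite, dropped lists keys that are jettisoned.
    """
    groups = defaultdict(list)
    for key in evicted_keys:
        groups[set_of(key)].append(key)
    moved = {sid: keys for sid, keys in groups.items() if len(keys) >= threshold}
    dropped = [k for keys in groups.values() if len(keys) < threshold for k in keys]
    return moved, dropped

# Hypothetical key -> set mapping, just for the example.
target_set = {"a": 0, "b": 0, "c": 1}
moved, dropped = plan_set_moves(["a", "b", "c"], target_set.get, threshold=2)
print(moved, dropped)
```

With a threshold of 2, objects "a" and "b" share one set rewrite while "c", which would have cost a whole set rewrite by itself, is dropped.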

While understanding an object’s LRU status is fairly easy if you have a DRAM index element for each object, it’s much harder when there’s no individual object index available, as in Kset.

To deal with tracking LRU in the Kset, fb engineers created an RRIParoo index with a DRAM index portion and a flash resident index portion.

- RRIParoo’s DRAM index is effectively a 40-bit bitmap with one bit per object, corresponding to the object’s location in the block. A bit that is on in this DRAM bitmap indicates that the corresponding object has been referenced since the last time the flash resident index was rewritten.

- RRIParoo’s flash resident index contains 3-bit integers, each one corresponding to an object in the block. Each integer represents how many clock ticks it has been since the corresponding object was last referenced. When the need arises to add an object to a full block, the object clock counters in that block’s RRIP flash index are all incremented until one reaches the oldest time frame, b’111′ or 7. It is this object that is evicted.

New objects are given an arbitrary clock tick count, say b’001′ or 1 (as shown in Fig. 6; in the paper they use b’110′ or 6), chosen to be not so high that the object is evicted right away but not so low that it is treated as highly referenced.
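Putting the DRAM bitmap and the flash resident counters together, eviction for one set might look roughly like the sketch below. It is a simplification under my own assumptions: the class is hypothetical, a referenced object has its counter reset to 0 when the set is rewritten (the paper may promote differently), and ties are broken by slot order.

```python
MAX_AGE = 7  # b'111', the oldest value a 3-bit counter can hold

class ToyRripSet:
    def __init__(self, capacity: int, insert_age: int = 6):
        self.capacity = capacity
        self.insert_age = insert_age  # starting counter for new objects
        self.keys = []                # objects in the set, by slot
        self.ages = []                # 3-bit counters, kept with the set on flash
        self.referenced = 0           # DRAM bitmap: bit i set = slot i was read

    def touch(self, key: str) -> None:
        """Record a hit in DRAM only; nothing is written to flash."""
        self.referenced |= 1 << self.keys.index(key)

    def insert(self, key: str):
        """Rewrite the set with a new object; return the evicted key, if any."""
        # Fold the DRAM bits into the counters, then clear the bitmap.
        for slot in range(len(self.keys)):
            if (self.referenced >> slot) & 1:
                self.ages[slot] = 0
        self.referenced = 0

        evicted = None
        if len(self.keys) >= self.capacity:
            # Incrementing every counter until one hits MAX_AGE is the same
            # as adding the gap between the current maximum and MAX_AGE.
            bump = MAX_AGE - max(self.ages)
            self.ages = [age + bump for age in self.ages]
            victim = self.ages.index(MAX_AGE)
            evicted = self.keys.pop(victim)
            self.ages.pop(victim)

        self.keys.append(key)
        self.ages.append(self.insert_age)
        return evicted

rrip = ToyRripSet(capacity=3)
for name in ("a", "b", "c"):
    rrip.insert(name)
rrip.touch("a")               # "a" is read while the set sits on flash
evicted = rrip.insert("d")    # set is full, so something must go
print(evicted, rrip.keys, rrip.ages)
```

Because "a" was touched, its counter drops to 0 before the aging step and "b" is evicted instead, even though "a" is the oldest insert. The only DRAM spent on this is one bit per object.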

How well does Kangaroo perform?

According to the paper, using the same flash storage and DRAM, Kangaroo can reduce cache miss ratio by 29% over set associative or log structured caches alone. They tested this with simulations replaying real-world activity from the fb social network.

The engineers did some sensitivity testing using various Kangaroo algorithm parameters to see how sensitive read miss rates were to Klog admission percentage, RRIParoo flash index element (clock tick counter) size, Klog capacity and Kset admission threshold.

Applications of the technology

Obviously this is great for Twitter and Facebook/Meta, as both of these deal with vast volumes of small data objects. But databases, Kafka data streams, IoT data, etc. all deal with small blocks of data and can benefit from the better caching that Kangaroo offers.

Storage could also use something similar, only in this case a) the objects aren’t small and b) the cache is all in memory. DRAM indexes for storage caching, especially when we have TBs of DRAM cache, can still be significant, especially if an index element is kept for each block in cache. So the technique could be deployed for large storage caches as well.

Then again, similar techniques could be used to provide caching for multiple tiers of storage. Say DRAM cache, SSD Log cache and SSD associative set cache for data blocks with the blocks actually stored on large disks or QLC/PLC SSDs.

Photo credit(s):

- Figure 3 from Facebook’s ACM SOSP paper, Caching billions of tiny objects on flash

- Figure 6 from Facebook’s ACM SOSP paper, Caching billions of tiny objects on flash

- Figure 1 from Facebook’s ACM SOSP paper, Caching billions of tiny objects on flash

- Figure 12 from Facebook’s ACM SOSP paper, Caching billions of tiny objects on flash