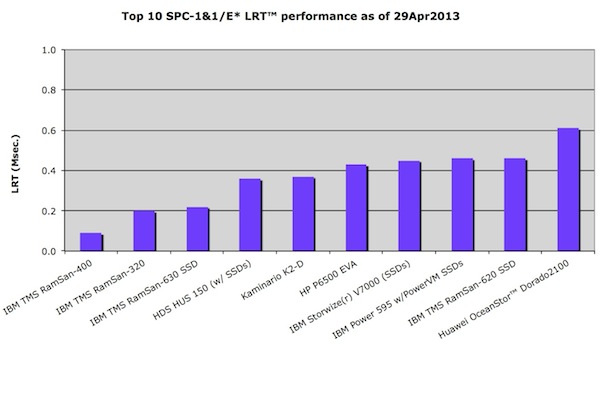

The figure above is one of the charts from our latest performance dispatch on SPC-1 and SPC-2 benchmark results. It shows SPC-2 throughput results sorted by aggregate MB/sec, with all three workloads broken out for more information.

Just last quarter I was saying that no all-flash system appeared able to do well on SPC-2's throughput-intensive workloads. Well, I was wrong (again). With an aggregate MBPS™ of ~33.5GB/sec., Kaminario’s all-flash K2 took the SPC-2 MBPS results to a whole different level, almost doubling the nearest competitor in this category (Oracle ZFS ZS3-4).

Ok, Howard Marks (deepstorage.net), my GreyBeardsOnStorage podcast co-host and long-time friend, had warned me that SSDs had the throughput to be winners at SPC-2, but that they would probably cost too much to be viable. I didn’t believe him at the time; how wrong I was.

As for cost, both Howard and I misjudged this one. The K2 came in at just under $1M USD, whereas the #2 Oracle system came in under $400K. But five other top-10 SPC-2 MBPS systems were priced over $1M, so the K2 all-flash system’s price was about average for the top 10.

Ok, if cost and high throughput aren’t the problem, why haven’t we seen more all-flash SPC-2 benchmarks? I tend to think that most flash systems are optimized for OLTP-like update activity, not sequential throughput. The K2 is obviously one exception. But to understand just what it was doing so well, we need to go a little deeper into the numbers.

The details

The LFP (large file processing) reported MBPS metric is the average of 1MB and 256KB data transfer sizes for streaming activity at 100% write, 100% read and 50:50 read:write. In K2’s detailed SPC-2 report, one can see that the K2 averaged ~26GB/sec. for the 100% write workload, ~38GB/sec. for the 100% read workload and ~32GB/sec. for the 50:50 read:write workload.
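As a quick sanity check on those numbers, here is a minimal Python sketch that rolls the three quoted LFP averages into a single figure. It assumes the three access patterns are weighted equally, which is a simplification on my part rather than the exact SPC-2 calculation, and the inputs are the rounded values quoted above.

```python
from statistics import mean

# Rounded per-workload averages (GB/sec.) quoted from K2's SPC-2 report.
lfp_gbps = {
    "100% write": 26.0,
    "100% read": 38.0,
    "50:50 read:write": 32.0,
}

# Assumption: weighting the three LFP access patterns equally
# approximates the reported LFP MBPS figure.
lfp_estimate = mean(lfp_gbps.values())
print(f"LFP estimate: ~{lfp_estimate:.1f} GB/sec.")
```

With these inputs the estimate comes out at ~32GB/sec., which lands squarely on the mixed 50:50 result.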

On the other hand, the LDQ (large database query) workload appears to be entirely sequential read-only, but the report shows that it is made up of two workloads, one using 1MB data transfers and the other using 64KB data transfers, with varying numbers of streams fired up to generate stress. The surprise in K2’s LDQ run is that it did much better on the 64KB data streams than on the 1MB data streams, averaging 41GB/sec. vs. 32GB/sec. This probably points to an internal bottleneck for large data transfers somewhere in the architecture.

The VOD (video on demand) workload also appears to be sequential and read-only. The report doesn’t indicate a data transfer size, but K2’s actual results, averaging ~31GB/sec., are close to its 1MB LDQ rate, which would seem to indicate transfers on the order of 1MB.
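To see how the three workloads hang together, the Python sketch below recombines the quoted averages into an overall figure. It assumes the SPC-2 MBPS metric is a simple average of the LFP, LDQ and VOD results, and that each workload's figure is a simple average of its sub-tests; both are assumptions for illustration. Because every input is rounded, the result only approximates the reported ~33.5GB/sec.

```python
from statistics import mean

# Rounded averages (GB/sec.) quoted in the text.
lfp = mean([26.0, 38.0, 32.0])  # 100% write, 100% read, 50:50 read:write
ldq = mean([41.0, 32.0])        # 64KB streams, 1MB streams
vod = 31.0                      # transfer size not stated in the report

# Assumption: the composite MBPS metric is the simple average of the
# three workload results.
composite = mean([lfp, ldq, vod])
print(f"LFP ~{lfp:.1f}, LDQ ~{ldq:.1f}, VOD ~{vod:.1f} "
      f"-> composite ~{composite:.1f} GB/sec.")
```

This prints a composite of ~33.2GB/sec., within rounding distance of the reported ~33.5GB/sec.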

So what we can tell is that K2’s SSD write throughput is lower than its read throughput (~1/3rd lower) and that relatively small sequential reads do better than relatively large ones (~1/4 better). But I must add that even at its relatively “slower” write throughput, the K2 would still have beaten the next best disk-only storage system by ~10GB/sec.
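For those who want to check the ratios, here is a short Python sketch using the rounded figures quoted earlier. It simply divides the numbers; nothing in it comes from the SPC-2 report beyond those four averages.

```python
# Rounded GB/sec. figures quoted in the text.
write_gbps, read_gbps = 26.0, 38.0        # LFP 100% write vs. 100% read
ldq_64k_gbps, ldq_1m_gbps = 41.0, 32.0    # LDQ 64KB vs. 1MB streams

# Fraction by which writes fall short of reads.
write_shortfall = 1 - write_gbps / read_gbps
# Fraction by which 64KB streams beat 1MB streams.
small_xfer_gain = ldq_64k_gbps / ldq_1m_gbps - 1

print(f"writes ~{write_shortfall:.0%} below reads")
print(f"64KB reads ~{small_xfer_gain:.0%} above 1MB reads")
```

That works out to writes ~32% below reads and 64KB reads ~28% above 1MB reads, in line with the ~1/3rd and ~1/4 rules of thumb.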

Where are the other all-flash SPC-2 benchmarks?

Prior to K2 there was only one other all-flash system (TMS RamSan-630) submission for SPC-2. I suspect that writing 26 GB/sec. to an all-flash system would be hazardous to its health and maybe other all-flash storage system vendors don’t want to encourage this type of activity.

Just for the record, the K2 SPC-2 result has been submitted for “review” (as of 18Mar2014) and may be modified before it is finally “accepted”. However, the review process typically affects performance results less than other report items. So, officially, we will need to wait for final acceptance before we can truly believe these numbers.

Comments?

~~~~

The complete SPC performance report went out in SCI’s February 2014 newsletter. But a copy of the report will be posted on our dispatches page sometime next quarter (if all goes well). However, you can get the latest storage performance analysis now and subscribe to future free newsletters by just using the signup form above right.

Even more performance information, along with OLTP, Email and Throughput ChampionCharts for Enterprise, Mid-range and SMB class storage systems, can be found in SCI’s SAN Buying Guide, available for purchase from our website.

As always, we welcome any suggestions or comments on how to improve our SPC performance reports or any of our other storage performance analyses.